Sustainable Investing: How Data Science Can Improve the Reliability of Reported Data

Clarity AI standardizes ESG data to establish a reliable database prior to the implementation of CSRD reporting

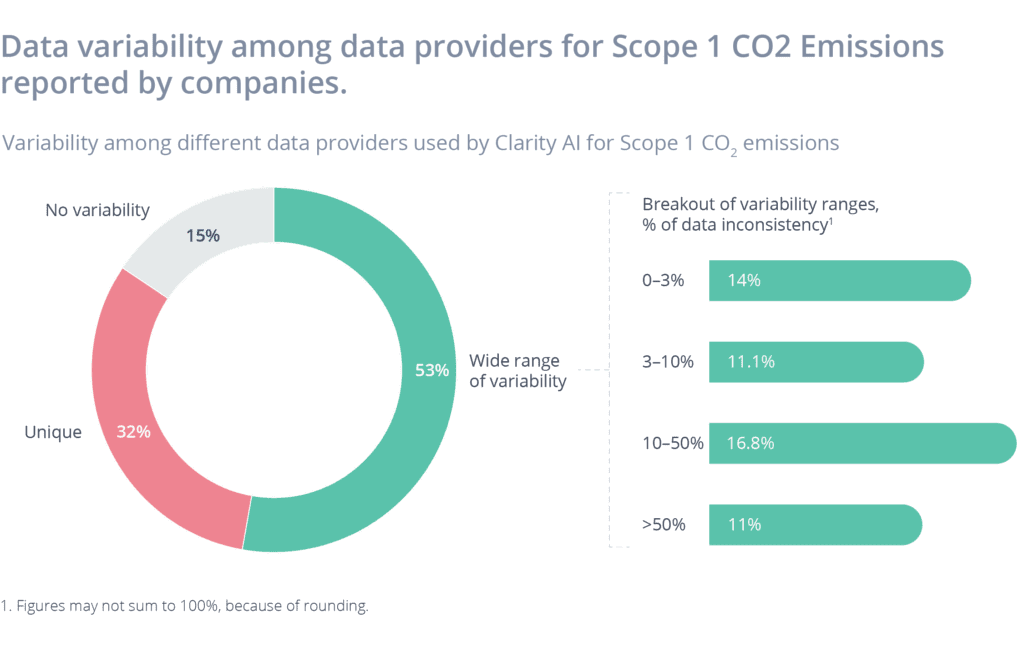

Sustainability performance data are still in their early days. The Corporate Sustainability Reporting Directive (CSRD) will eventually make these data part of companies’ annual reports, subject to third-party auditing. However, CSRD will not be fully implemented until 2025, and until then the reliability of reported data will remain limited. This applies even to widely used quantitative metrics such as Scope 1 CO2 emissions which, despite being highly material, can vary considerably from one data source to another because of errors, a lack of standardization, and generally poor data quality across providers. This holds even for data that companies report themselves. The higher the variability across sources, the less reliable the data.
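One simple way to put a number on "variability among data sources" is the coefficient of variation: the standard deviation of the values that different providers report for the same metric, divided by their mean. The sketch below is an illustration under that assumption, not Clarity AI's method; the function name `coefficient_of_variation` is hypothetical, and the input values are the four Scope 1 figures from the case study below.

```python
from statistics import mean, pstdev


def coefficient_of_variation(values: list[float]) -> float:
    """Population standard deviation divided by the mean (hypothetical helper).

    A higher result means the providers disagree more about the same figure.
    Raises ValueError for an empty list or a zero mean.
    """
    if not values:
        raise ValueError("need at least one value")
    m = mean(values)
    if m == 0:
        raise ValueError("mean is zero; coefficient of variation is undefined")
    return pstdev(values) / m


# Scope 1 values (tons CO2) reported by four providers in the case study.
reported = [5_800, 5_800, 5_000, 50_000]
print(round(coefficient_of_variation(reported), 2))      # all four sources
print(round(coefficient_of_variation(reported[:3]), 2))  # without the 50,000 figure
```

With all four values the ratio is about 1.16; dropping the single 50,000-ton figure brings it down to about 0.07, which shows how strongly one divergent source drives the measured disagreement.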

Clarity AI leverages three key differentiators to establish the most reliable database available today:

- Assemble the largest collection of structured and unstructured data sources in a global database.

- Use in-house and external technical data expertise to aggregate, clean, and standardize this database.

- Leverage proprietary machine-learning algorithms and data science techniques to detect outliers and automatically select the best source for overlapping data, as well as to obtain accurate estimates for non-reported data.
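The third point mentions estimates for non-reported data. Clarity AI's models are proprietary and not described here, so the sketch below shows only a common and much simpler baseline as a stand-in: apply the median emissions intensity (emissions per unit of revenue) of a company's reporting peers to the company's own revenue. The function name, the use of revenue as the normalizer, and the shape of the peer data are all assumptions for illustration.

```python
from statistics import median


def estimate_from_peer_intensity(
    company_revenue: float,
    peers: list[tuple[float, float]],
) -> float:
    """Estimate an undisclosed emissions figure from reporting peers.

    Hypothetical baseline, not Clarity AI's model.
    peers: (reported_emissions, revenue) pairs for companies in the same peer group.
    Returns the peer-group median intensity multiplied by the company's revenue.
    """
    # Emissions per unit of revenue for each peer; skip peers without usable revenue.
    intensities = [emissions / revenue for emissions, revenue in peers if revenue > 0]
    if not intensities:
        raise ValueError("no peers with positive revenue")
    return median(intensities) * company_revenue
```

The median is used instead of the mean so that a single peer with a misreported figure shifts the estimate less.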

Data Sources

Clarity AI draws on more than two million data points of various types (for example, quantitative, qualitative, and news). These include proprietary data from machine-learning models that estimate metrics companies do not disclose, as well as exclusive data obtained through Clarity AI’s partnerships with recognized data providers worldwide (for example, for news on controversies), which support deeper and richer insights.

Technical Data Expertise

Clarity AI’s data engineering and DevOps teams are experts in data life-cycle management and use state-of-the-art tools for automated data ingestion, processing, validation, and storage. The team cleans and standardizes each company’s data, classifies companies into peer groups, and identifies their key operating metrics.
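Part of standardizing emissions data is converting every reported value to a single unit before sources are compared: a figure reported in kilotonnes but read as tonnes is off by a factor of 1,000. A minimal sketch follows; the unit labels and function names are hypothetical, and real data feeds use their own spellings.

```python
# Conversion factors to metric tonnes of CO2-equivalent (tCO2e).
# The unit labels are hypothetical placeholders for whatever a feed actually uses.
TO_TONNES = {
    "tCO2e": 1.0,
    "ktCO2e": 1_000.0,
    "MtCO2e": 1_000_000.0,
}


def to_tonnes(value: float, unit: str) -> float:
    """Convert a reported emissions value to tCO2e, rejecting unknown units."""
    try:
        return value * TO_TONNES[unit]
    except KeyError:
        raise ValueError(f"unknown unit: {unit!r}") from None


def standardize(records: list[tuple[str, float, str]]) -> dict[str, float]:
    """Map each (source, value, unit) record to {source: value in tCO2e}."""
    return {source: to_tonnes(value, unit) for source, value, unit in records}
```

Unknown units raise an error instead of being passed through, so an unrecognized label is surfaced for review rather than silently compared as if it were tonnes.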

Artificial Intelligence

Confirmed data are great; triple-confirmed data are better. Clarity AI uses its multiple sources, as well as overlapping coverage of key metrics, to ensure data consistency and reliability. To remove potential inconsistencies within this consolidated database, Clarity AI’s proprietary machine-learning algorithms choose the best sources and detect outliers much as an analyst would using domain expertise, but at scale and without human bias.
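A standard and much simpler relative of this kind of outlier detection is the modified z-score of Iglewicz and Hoaglin, which measures how far each source's value lies from the cross-source median in units of the median absolute deviation (MAD). The sketch below uses it purely as an illustration; Clarity AI's algorithms are proprietary, the function name is hypothetical, and the 3.5 cut-off is the conventional value from the statistics literature rather than one taken from Clarity AI.

```python
from statistics import median


def flag_outliers(values: dict[str, float], threshold: float = 3.5) -> dict[str, bool]:
    """Flag sources whose value is far from the cross-source median.

    Uses the modified z-score 0.6745 * (x - median) / MAD.
    Returns {source_name: True if the value is an outlier}.
    """
    center = median(values.values())
    mad = median(abs(v - center) for v in values.values())
    if mad == 0:
        # At least half the sources report exactly the median value;
        # any deviation is then infinitely many MADs away, so flag it.
        return {source: v != center for source, v in values.items()}
    return {
        source: abs(0.6745 * (v - center) / mad) > threshold
        for source, v in values.items()
    }
```

The median and MAD are used instead of the mean and standard deviation because, with only a handful of sources, a single extreme value inflates the standard deviation enough to hide itself from an ordinary z-score.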

Case Study

Salesforce’s 2019 Scope 1 CO2 emissions were reported inconsistently across data sources. Two data providers gave a value of 5,800 tons, a third reported 5,000 tons, and a fourth reported 50,000 tons. Clarity AI’s algorithm concluded that the 5,000-ton value was the most reliable, and Salesforce’s own annual report backed up this conclusion.
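To make the disagreement concrete, here is the modified z-score (a standard robust outlier statistic, used as an illustration rather than as Clarity AI's proprietary method) worked through for the four reported values, in tons of CO2:

```latex
\begin{aligned}
x &= (5800,\ 5800,\ 5000,\ 50000) \\
\tilde{x} &= \operatorname{median}(x) = \tfrac{5800 + 5800}{2} = 5800 \\
\mathrm{MAD} &= \operatorname{median}\bigl(|x_i - \tilde{x}|\bigr)
             = \operatorname{median}(0,\ 0,\ 800,\ 44200) = \tfrac{0 + 800}{2} = 400 \\
M_i &= \frac{0.6745\,(x_i - \tilde{x})}{\mathrm{MAD}} \\
M_{5000} &= \frac{0.6745 \times (-800)}{400} \approx -1.35,
\qquad
M_{50000} = \frac{0.6745 \times 44200}{400} \approx 74.5
\end{aligned}
```

Against the conventional cut-off of |M_i| > 3.5, only the 50,000-ton value is flagged. By contrast, an ordinary z-score for that value, using the mean (16,650) and population standard deviation (about 19,260), is only about 1.73, because the outlier inflates the standard deviation. Neither statistic by itself explains why 5,000 tons was preferred over the 5,800 tons reported by two providers; the additional signals Clarity AI's algorithm uses for that choice are not described here.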